Behind the Scenes: Crafting Scalable Platform and Multichannel Applications for SaaS Platforms

Building for multiple channels isn't a technical challenge first. It's a product strategy challenge.

Across my work at Microsoft, Egnyte, Passport Labs, and now Gradient Advisory, one pattern holds in every platform build I've been part of: teams that treat multichannel as an engineering problem from the start almost always end up rebuilding it.

The ones that get it right start with a clear picture of who they're building for, what those users are actually trying to accomplish, and only then figure out which channels need to exist and how they should connect.

Here's how I think about it.

Start With the Job to Be Done — Not the Channel List

Before deciding whether you need a web app, a mobile experience, a voice interface, or all three, you need to understand the job your customer is trying to do and where in their workflow they're trying to do it.

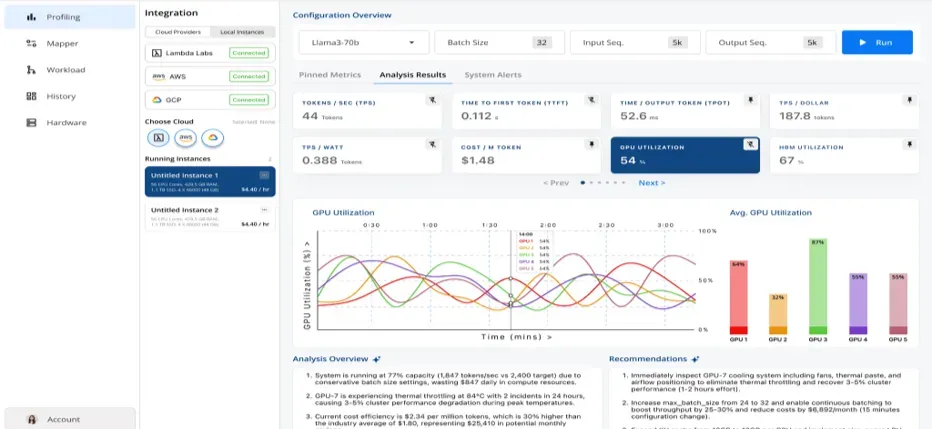

This isn't abstract. At Runara.ai (formerly AllyIn.ai), where I worked with the founding team on early product strategy, the ICP included data center professionals, supply chain operators, and procurement teams managing AI infrastructure. These aren't personas who sit at a desk refreshing a dashboard all day. They're in the field, context-switching constantly, needing information surfaced at the right moment in the right format.

That insight shaped the entire interaction framework — before a single line of code. It told us that a web-only experience would underserve the use case, that a conversational layer wasn't a nice-to-have, and that the channel architecture had to support both structured data views and natural language queries depending on context.

Define the job to be done first. The channel decisions follow once the initial product is ready.

Build the Interaction Framework Before the Feature List

Once you understand the user's job and environment, the next step is mapping the interaction architecture — what I call the IA layer: how users move through the product across stages, what each stage needs to accomplish, and where friction is most costly.

For Runara, working with the CTO, we designed a staged journey:

Discovery and onboarding — how does a data center operator or procurement lead land in the product and orient quickly without a manual?

Core workflow — what does the daily or weekly usage loop look like for their specific role?

Escalation and exception handling — when something goes wrong at the inference layer or a cost threshold is crossed, how does the product surface that signal and guide action?

Layering an agentic and conversational interface on top of that structured journey — using LLMs, RAG pipelines, and voice capabilities via ElevenLabs — only worked because the underlying interaction framework was already clear. The AI layer amplified the experience; it didn't substitute for thinking through the UX fundamentals.

This sequencing matters. Multimodal and agentic features built on top of an unclear IA create confusion at scale.

Platform Architecture Has to Match the Go-to-Market Reality

Technical architecture decisions and GTM decisions are not separate conversations. They need to happen in parallel.

At Egnyte, building cloud storage and collaboration integrations for enterprise customers meant the platform had to be reliable enough to support M365 and Google Workspace workflows that 99% of enterprise users depend on daily. Latency, uptime, and data residency weren't just engineering concerns — they were sales blockers or enablers depending on whether we got them right.

At Passport Labs, IoT and curb management integrations meant dealing with real-time data streams from physical infrastructure. The architecture had to handle intermittent connectivity, edge processing, and multi-system orchestration — and that directly shaped which ISV partnerships were viable and which weren't.

The principle is consistent: your cloud infrastructure strategy, your API design, your native integration decisions, and your approach to evals, reliability, and security should all be grounded in the specific reliability and performance bar your target customers require, not a generic "scalable architecture" aspiration.

AI/ML Integration Needs a Clear Value Hypothesis

Adding AI and ML to a SaaS platform is not a differentiation strategy on its own. The question is always: what specific decision or workflow does this make faster, more accurate, or less effortful for the user?

At Runara, the value hypothesis was concrete: real-time telemetry and intelligent orchestration to reduce AI inference costs and surface performance anomalies before they compound. That's a measurable outcome. It gave the product team a clear test for every AI feature — does this move that needle, or is it capability for its own sake?

The integrations that drove real engagement were the ones tied directly to that hypothesis: inference cost visibility, drift alerts, and model performance comparisons surfaced in the workflow where operators actually make decisions.
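To make "surface performance anomalies before they compound" a little more tangible, here is a minimal sketch of one common approach: flagging a telemetry sample when it deviates sharply from a trailing window. This is an illustration only, not how Runara implemented it. The function name `flag_anomalies`, the window size, the threshold, and the sample cost figures are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


def flag_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that sit more than `threshold` standard
    deviations away from the mean of the previous `window` samples."""
    recent = deque(maxlen=window)  # trailing window of prior samples
    flagged = []
    for i, value in enumerate(samples):
        # Only score a sample once a full window of history exists.
        if len(recent) == window:
            mu = mean(recent)
            sigma = pstdev(recent)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                flagged.append(i)
        recent.append(value)
    return flagged


# Hypothetical inference cost per 1k requests, with one obvious spike.
cost_per_1k = [0.40, 0.41, 0.39, 0.40, 0.42, 0.41, 0.95, 0.40]
print(flag_anomalies(cost_per_1k))
```

The design point is less the statistics than the placement: a check like this only earns its keep if the flagged sample shows up in the workflow where an operator can act on it. Note that in this simple version a spike also enters the trailing window and widens the baseline for the next few samples, which a production system would likely want to handle.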

AI/ML earns its place in a platform when it's anchored to a specific, testable value hypothesis. Without that, it becomes the most expensive way to add complexity.

Close the Loop: Agile, Post-Launch, and the ARR Connection

Product strategy doesn't end at launch. The builds I've seen scale successfully all had one thing in common: a structured feedback loop that connected post-launch usage data directly back to product and partnership decisions.

In practice, that means:

Activation and workflow metrics — not just DAU/MAU, but are users completing the core job the product was designed for?

Feedback channels across CS, sales, and support — systematically fed into a product iteration cycle, not collected and forgotten

Partner and channel signals — are integration partners seeing adoption? Where are the handoffs breaking down?
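As a rough illustration of the first item, here is a minimal sketch of an activation metric that asks "did active users complete the core job?" rather than just "did they show up?". It assumes a hypothetical event log where each event carries a user ID and an event name, and a hypothetical `core_job_completed` event. Your own instrumentation will use different names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_id: str
    name: str  # hypothetical event name, e.g. "login" or "core_job_completed"


def activation_rate(events, core_event="core_job_completed"):
    """Share of active users who completed the core job at least once."""
    active = {e.user_id for e in events}  # anyone with any event
    activated = {e.user_id for e in events if e.name == core_event}
    if not active:
        return 0.0
    return len(activated) / len(active)


# Hypothetical sample: four active users, two of whom completed the core job.
events = [
    Event("u1", "login"),
    Event("u1", "core_job_completed"),
    Event("u2", "login"),
    Event("u3", "login"),
    Event("u3", "core_job_completed"),
    Event("u4", "login"),
]
print(f"activation rate: {activation_rate(events):.0%}")
```

In this sample all four users would count toward DAU, but only half of them did the thing the product exists for. That gap is the signal worth feeding back into the roadmap.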

At Runara, this loop informed both the product roadmap and the GTM positioning. Customer and channel feedback shaped which use cases to lead with in partner conversations, which in turn influenced which integrations to prioritize next. The cycle between product iteration and ARR growth only works when the feedback infrastructure is built deliberately — not assembled after the fact.

What This Looks Like in Practice

Stepping back, the through-line across every multichannel platform build I've worked on is this:

User clarity → Interaction framework → Architecture decisions → AI/ML with a clear hypothesis → Post-launch feedback loop

Each stage depends on the one before it. Skipping steps doesn't accelerate the build — it creates rework.

The platforms that scale aren't necessarily the ones with the most channels or the most integrations. They're the ones where every channel decision, every integration, and every AI feature traces back to a clear picture of what the customer is trying to do — and a disciplined process for measuring whether you helped them do it.

If you're working through a multichannel platform build or evaluating your AI integration strategy, I'm happy to compare notes — drop a comment or reach out directly.