Why Your AI Agent Demos Don't Survive Production

Most agents don’t fail at the prototype phase. They fail in production.

I’ve shipped AI products at Microsoft, Passport Labs, Wells Fargo lab across B2X SaaS — and the same pattern shows up every time. A demo wows the room. Then the agent hits a real CRM, tries to update a live ticket, searches a secure file system — and falls apart. It confidently says it has done the part, but the artifact is nowhere to be found, which is what I commonly saw with the Microsoft Co-Pilot in M365 apps, too.

Here’s what I’ve learned about what actually separates a demo from a production-worthy system.

Not Every Workflow Should Be Agentic

Before anything else, you need to be honest with yourself about use-case fit.

Great candidates: multi-step automation with clear success criteria, deterministic flows, tool-driven tasks, low blast radius. Think ticket triage → fix, API data sync, document extraction → validate → update.

Bad candidates: irreversible actions, high-stakes decisions, anything requiring tacit expertise. I tried applying agents to complex legal and clinical judgment tasks (GDPR, RFI workflows) — we had to narrow the scope to sub-use cases before it was usable.

The question isn’t “can an agent do this?” It’s “can we measure whether it did it correctly?”

Evals Are the Product

This is the hardest mindset shift for most teams. Evals and monitoring aren’t something you add before launch — they are the product.

Think through the eval stack:

Accuracy — task success rate, precision/recall for extraction

Reliability — MTBF, tool call error rate

Latency — p50/p95/p99 response times

Cost — $ per completed task

Safety — policy violation rate, unsafe action count

Drift — input distribution shift, embedding mismatch

Trust — user satisfaction, override rate by human operators

Each one maps directly to an architectural decision you made earlier — planner logic, tool schema, memory strategy, safety layer. If you haven’t defined pass/fail for each layer, you’re flying blind.

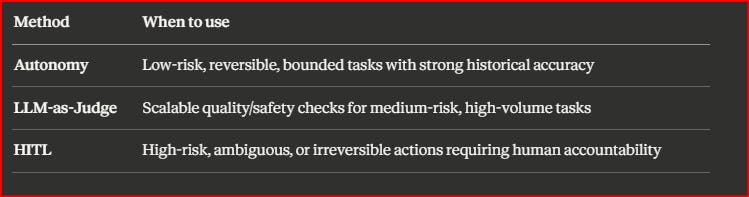

When to Use Autonomy vs. LLM Judge vs. Human-in-the-Loop

This is where teams waste the most time debating instead of deciding.

Here’s my rule of thumb:

In practice? Most production systems use dual-review — LLM judges handle the high-volume, low-risk checks while human reviewers focus on high-risk or high-ambiguity cases where liability or domain expertise actually matters.

The Failure Modes No One Talks About

I’ve been building long enough to have hit all of these.

Ambiguous user inputs are more dangerous than they look. A support agent sees: “This is not working, fix it.” → The agent tries multiple tools, retries, reasoning trace balloons, and token costs spike. Vague inputs are one of the most common paths to cascading failure. I have seen it blow up my N8N tokens in a single session!

Upstream API failures compound fast. When rolling out an M365 Cloud Storage and collaboration project past beta at one of the B2B Collaboration Platforms, as a Principal Tech PM, 3rd-party API slowness caused data corruption and rising customer issues. We caught it early in Beta as Security and data sovereignty were first core principles — but at scale, you need fault tolerance baked in from the start, not bolted on, and it requires holistic treatment from infrastructure, orchestration, to application and UX layer, alongside active health checks with the Partner services.

Multi-agent coordination is the hardest thing to debug. I see it constantly — in n8n, in Cursor, even in Co-pilot, in production systems. Agents stepping on each other’s state, conflicting tool calls, infinite delegation loops (”Agent A asks Agent B who asks Agent A...”), confidently saying something is done, with no artifacts to be found anywhere. Even when all the APIs are healthy, coordination failure is a top-tier reliability risk - apparently, Co-Pilot, in one case, admitted after 3 tries, that it doesn’t have write access to M365 apps and can’t do certain things which it said it did before.

Dataset drift is sneaky. In my experience with MLOps at Wells Fargo’s Innovation Lab, upstream credit/merchant data changes caused models to drift in two ways: unforeseen parameters affecting model weights (requiring fine-tuning) and data staleness (more manageable with RAG and regular updates). The first is expensive. The second is survivable if you plan for it. BTW, MLOps experience with data and model monitoring does translate to LLMops, which is still an evolving field.

Monitoring Is Not Optional

Once you’re in production, you need:

Product metrics: task success rate, policy violation count, data drift signals

Engineering metrics: tool error rate, confidence vs. accuracy calibration gap, latency p99

Business metrics: cost per session, cost per 1K tokens, API spend

And governance isn’t bureaucracy — it’s structured telemetry, replay/rollback support, audit logs, and alerting rules tied to safety, cost, and performance thresholds. If you don’t have trace IDs and input snapshots, you can’t do post-mortems.

I also advocate to treat UI and the app layer, and establishing trust with the key foundation of CX thinking. Apply first principles - transparency and clarity around what the agent can do, can’t do, showing proof-point on how it reasoned, or even citations, and in one of the procurement flows, the client challenged us on our open source LLM models, and how we delivered better than generic models like ChatGPT, we showed side-by-side comparison across key dimensions.

The Framing I Use

Think in Agentic PDLC terms: design → evals → monitoring → rollout → governance.

Roll out deliberately: internal alpha → controlled beta → gradual ramp → full launch. Watch canary signals — safety violations, task-success deltas, user-friction spikes.

According to Deloitte’s 2025 Tech Trends report, only 1 in 10 organizations has agents in production. 38% are still running pilots. The gap between those groups is almost always about evaluation discipline, not model capability.

Robust evals aren’t a nice-to-have. They’re what reduces rollback cost, catches failure modes early, and lets you scale without incident.

If you’re building agentic systems and want to compare notes, I’m happy to dig into specifics — drop a comment or reach out directly.